A Technical Post Mortem and Strategic Roadmap for AI Infrastructure Security

I remember standing in a server room in 2019, watching a junior dev’s face turn pale as he realized a “helpful” Chrome extension had just exfiltrated our production database keys. It was a simple mistake: a tool meant to format JSON was actually a wrapper for a credential harvester. Back then, we called it a hard lesson in endpoint management. But in the spring of 2026, when the news broke about the Vercel security incident, I felt that same cold pit in my stomach. The scale was different, the technology was more advanced, but the fundamental failure was identical: we trusted a third party more than we monitored our own identity perimeter.

The Vercel breach didn’t happen because of a zero-day in Next.js or a flaw in the React core. It happened because an employee clicked “Allow All” on an AI tool designed to help them work faster. This is the new reality of software development. We are building systems at machine speed, but our security models are still stuck in a human-centric era. If you are a developer, a CISO, or a student looking at the job market, you need to understand that the “Vercel Wake-Up Call” isn’t about one company’s bad weekend. It is about a structural shift where your identity is the new firewall, and your AI tools are the most likely vector for your next catastrophic failure.

The Domino Effect: How a Gaming Cheat Toppled a Cloud Giant

The real kicker is how mundane the initial compromise was. The entire chain of events that put Vercel’s internal systems at risk started not with a state-sponsored hack, but with a gaming script. In early 2026, an employee at a small AI vendor called Context.ai downloaded what they thought was a cheat for Roblox. Instead, they installed Lumma Stealer. This wasn’t some high-level espionage; it was basic malware that captured browser sessions and corporate credentials from an infected machine.

Once the attacker had a foothold inside Context.ai, they realized they had access to something far more valuable than game items: OAuth tokens. Context.ai’s “AI Office Suite” was a consumer-grade product that many developers used to automate document creation and email management. By compromising the AWS environment at Context.ai, the hackers were able to exfiltrate valid session tokens for every user of that suite, including at least one Vercel employee who had used their corporate Google Workspace account to sign up.

Mechanical Breakdown: The Anatomy of the Initial Pivot

| Attack Phase | Action Taken | Tool/Mechanism | Impact |
| --- | --- | --- | --- |
| Initial Infection | Roblox “cheat” download | Lumma Stealer Malware | Compromised developer machine at Context.ai. |
| Lateral Pivot | AWS Environment access | Exfiltration of OAuth tokens | Access to 3rd-party integration secrets. |
| Trust Escalation | Token presentation | Google Workspace OAuth | Attacker inherits “Allow All” permissions. |
| Internal Access | Environment Variable Readout | Vercel Control Plane | Exposure of plaintext API keys and metadata. |

What most people miss is that this wasn’t a “breach” of Vercel in the traditional sense; it was a legitimate use of a stolen trust artifact. When the attacker used the Vercel employee’s OAuth token, Vercel’s systems saw a valid, authenticated user. Because the employee had granted “Allow All” permissions to the Context.ai app, the attacker could effectively walk through the front door of Vercel’s Google Workspace. From there, the pivot to internal Vercel environments was trivial. They began enumerating environment variables that were not marked as “sensitive,” gaining a map of the internal infrastructure and potential access to customer data.

The OAuth Trap: Why Your Convenience is a Security Nightmare

Here’s how to spot the red flags before you become the next headline. Most developers treat OAuth permission screens like “Terms and Conditions”: they just scroll to the bottom and click “Accept.” But in the AI era, those permissions are the keys to your kingdom. The Vercel incident showed that when an employee grants an AI tool access to “read and write emails” or “manage files,” they are essentially creating a permanent, programmatic backdoor that bypasses MFA.

The hidden mechanics of this failure lie in the persistent nature of OAuth refresh tokens. Unlike a standard login session that might expire in 24 hours, these integration tokens are designed to stay active so the AI tool can work in the background. If the tool vendor is compromised, every organization that ever “integrated” with them is suddenly vulnerable. This is a massive governance gap. Most companies have no inventory of which employees have authorized which third-party AI apps, meaning the attack surface is effectively invisible until the ransom demand hits BreachForums.
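To make the inventory gap concrete, here is a minimal sketch of a grant audit. It assumes you have already exported your third-party OAuth grants into simple records; the `OAuthGrant` shape, the sample data, and the decision about which scopes count as “broad” are all illustrative assumptions, not any vendor’s API. The three scope strings are real Google OAuth scopes.

```python
from dataclasses import dataclass

# Scopes treated as "broad" in this sketch. The strings are real Google
# OAuth scopes; which ones you choose to flag is a policy assumption.
BROAD_SCOPES = {
    "https://mail.google.com/",                  # full Gmail access
    "https://www.googleapis.com/auth/drive",     # full Drive access
    "https://www.googleapis.com/auth/calendar",  # full Calendar access
}


@dataclass(frozen=True)
class OAuthGrant:
    """One user's authorization of one third-party app (hypothetical record)."""
    user: str
    app_name: str
    scopes: tuple


def flag_broad_grants(grants):
    """Return (grant, broad_scopes) pairs for grants holding any broad scope."""
    flagged = []
    for grant in grants:
        broad = sorted(s for s in grant.scopes if s in BROAD_SCOPES)
        if broad:
            flagged.append((grant, broad))
    return flagged


if __name__ == "__main__":
    inventory = [
        OAuthGrant("dev@example.com", "Example AI Suite",
                   ("https://mail.google.com/",
                    "https://www.googleapis.com/auth/drive")),
        OAuthGrant("ops@example.com", "Calendar Helper",
                   ("https://www.googleapis.com/auth/calendar.readonly",)),
    ]
    for grant, broad in flag_broad_grants(inventory):
        print(f"REVIEW {grant.user} -> {grant.app_name}: {', '.join(broad)}")
```

Even a crude report like this turns an invisible attack surface into a list someone can review and revoke.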

Choosing Friction Over Failure

Choosing a restrictive integration policy over a “developer-friendly” free-for-all will save you millions in incident response costs later. I’ve seen teams fight against restricted OAuth scopes because it “slows them down.” But consider the ROI: an extra ten minutes of security review per tool vs. a $2 million ransom demand and 100+ hours of forensic investigation. For any organization scaling AI, the decision to implement a centralized “Agent Registry” is no longer optional. You must know exactly what agents are running, who authorized them, and what specific data they can touch.
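What an “Agent Registry” looks like will differ by organization, but the core idea fits in a few lines. The sketch below is one possible shape, assuming an in-memory store; the class names, field names, and scope strings such as `docs:read` are hypothetical. The design point is default-deny: an agent that was never registered can touch nothing.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AgentRecord:
    """Who authorized an agent and which data scopes it may touch."""
    agent_id: str
    authorized_by: str
    data_scopes: frozenset
    registered_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class AgentRegistry:
    """In-memory registry; unknown agents and unlisted scopes are denied."""

    def __init__(self):
        self._agents = {}

    def register(self, agent_id, authorized_by, data_scopes):
        record = AgentRecord(agent_id, authorized_by, frozenset(data_scopes))
        self._agents[agent_id] = record
        return record

    def can_access(self, agent_id, data_scope) -> bool:
        record = self._agents.get(agent_id)
        return record is not None and data_scope in record.data_scopes

    def authorized_by(self, agent_id) -> Optional[str]:
        record = self._agents.get(agent_id)
        return record.authorized_by if record else None


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register("agent-x", "junior_dev_a", {"docs:read"})
    print(registry.can_access("agent-x", "docs:read"))      # registered scope
    print(registry.can_access("agent-x", "prod-db:write"))  # never granted
    print(registry.can_access("shadow-agent", "docs:read")) # never registered
```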

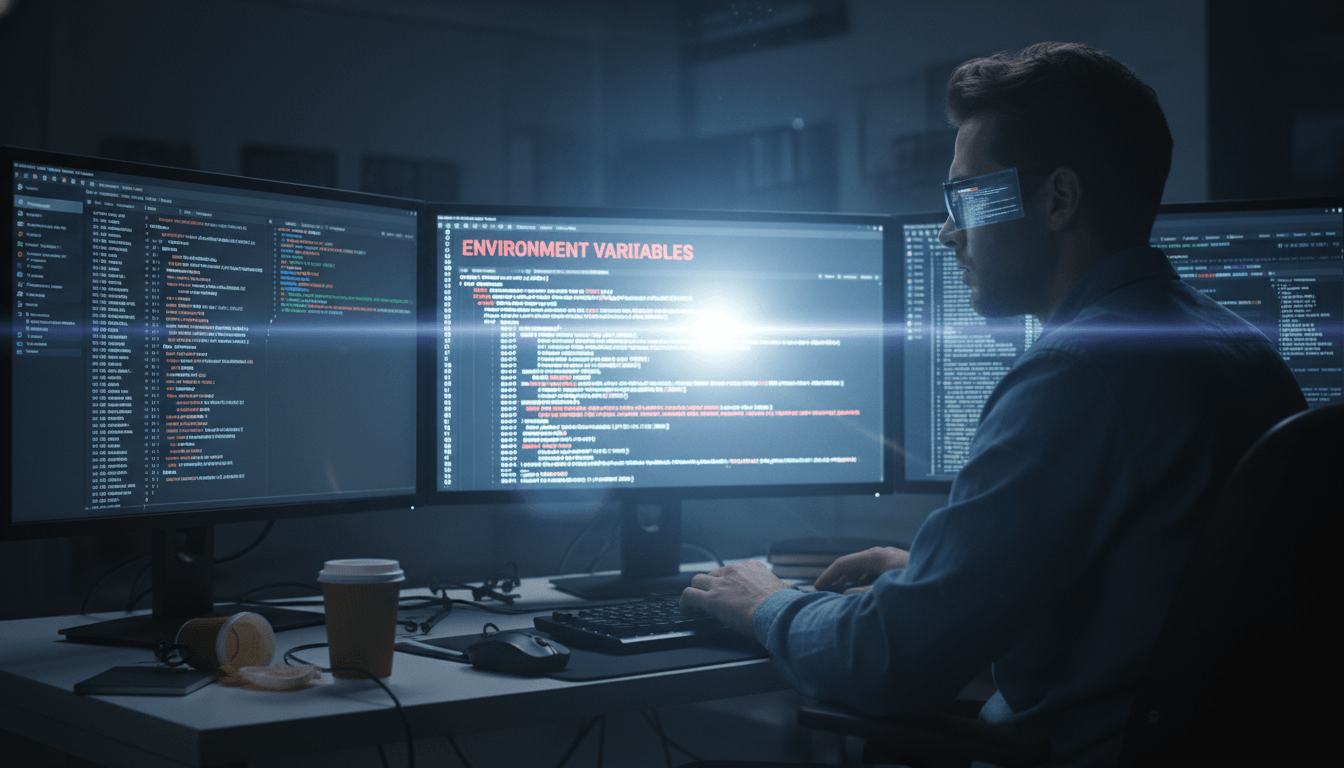

The “Non-Sensitive” Variable Myth: A Blueprint for Lateral Movement

The real story of the Vercel breach isn’t just about how the hackers got in; it’s about what they found once they were inside. Vercel’s investigation confirmed that the attackers accessed environment variables that were not marked as “sensitive”. To many, this sounds like a minor leak. In reality, it’s a blueprint for a total system takeover.

In most cloud platforms, “non-sensitive” variables are stored in plaintext and are easily readable by anyone with basic environment access. What developers often put in these “non-sensitive” slots includes internal API endpoints, database schema names, and configuration flags. For a sophisticated attacker, this is exactly what they need to map your internal network and find the “Crown Jewels”: the encrypted databases and production secrets.

The Hidden Mechanics of Environment Enumeration

When an attacker gains access to a CLI or a dashboard, their first move is enumeration. They aren’t looking for the password to the main vault yet; they are looking for the path to the vault. Plaintext variables provide that path. By seeing which services talk to which internal IPs, the attacker can identify where the most valuable data lives.

To implement a successful defense, you have to treat every environment variable as potentially sensitive. The step-by-step fix is to enforce encryption at rest for all configuration data and use a secrets manager that requires identity-based “Just-In-Time” access. If a variable doesn’t need to be read by a human, it should never be readable in plaintext through a dashboard.
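A quick way to find out how much of your “non-sensitive” config is actually a map for an attacker is to scan it. The sketch below is a heuristic only: the regular expressions, the `(value, marked_sensitive)` input shape, and the sample variables are assumptions made for illustration, and a real audit would pull this data from your platform’s own export.

```python
import re

# Name fragments that usually indicate a credential (heuristic, assumed).
SECRET_NAME_HINTS = re.compile(
    r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE
)

# Values that reveal internal topology: private IPv4 ranges, ".internal"
# hostnames, or database connection URLs (heuristic, assumed).
INTERNAL_VALUE_HINTS = re.compile(
    r"(\b10\.\d{1,3}\.\d{1,3}\.\d{1,3}\b"
    r"|\b192\.168\.\d{1,3}\.\d{1,3}\b"
    r"|\b172\.(1[6-9]|2\d|3[01])\.\d{1,3}\.\d{1,3}\b"
    r"|\.internal\b"
    r"|^(postgres|postgresql|mysql|redis|mongodb)://)",
    re.IGNORECASE,
)


def audit_env_vars(variables):
    """variables maps name -> (value, marked_sensitive).

    Returns (name, reason) pairs for variables that are NOT marked
    sensitive but look risky, sorted by name.
    """
    findings = []
    for name in sorted(variables):
        value, marked_sensitive = variables[name]
        if marked_sensitive:
            continue
        if SECRET_NAME_HINTS.search(name):
            findings.append((name, "name suggests a credential"))
        elif INTERNAL_VALUE_HINTS.search(value):
            findings.append((name, "value reveals internal topology"))
    return findings


if __name__ == "__main__":
    sample = {
        "PAYMENTS_API_KEY": ("placeholder", False),
        "DATABASE_URL": ("postgres://10.0.4.12:5432/app", False),
        "LOG_LEVEL": ("info", False),
        "GITHUB_TOKEN": ("placeholder", True),
    }
    for name, reason in audit_env_vars(sample):
        print(f"{name}: {reason}")
```

Anything this flags is a candidate for the secrets manager, not the plaintext slot.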

The Ransom Economy: ShinyHunters and the $2 Million Asking Price

Shortly after the Vercel breach, a threat actor claiming to be part of the ShinyHunters group posted on BreachForums, claiming to have stolen API keys, npm tokens, GitHub tokens, and portions of Vercel’s source code. They even shared a sample dataset of 580 employee records as “proof”. The asking price? $2 million.

While Vercel maintained that the impact was confined to a “limited subset” of customers, the market reaction was one of pure anxiety. The real damage from these incidents is rarely the data itself; it’s the erosion of trust in the platform. If developers start to wonder if their npm packages or deployment pipelines are safe, they will move to competitors. For a platform like Vercel, which powers a massive portion of the web via Next.js, the reputational ROI of a “security-first” posture is immeasurable.

Impact Statistics: The Rising Cost of AI-Powered Breaches (2025-2026)

| Metric | 2024 Average | 2025/2026 Average | Trend |
| --- | --- | --- | --- |
| Cost of Data Breach | $4.44 Million | $5.72 Million | +29% |
| Time to Detect (AI-Secured) | 185 Days | 108 Days | -42% |
| AI-Related CVE Growth | 1,582 | 2,130 | +34.6% |
| Ransom Demands (Median) | $800k | $1.8 Million | +125% |

The data shows a clear divergence: companies that invest in AI-powered security detection are saving millions by catching these breaches months earlier. But the “Pacesetters,” the top 13% of organizations, are the ones who aren’t just reacting; they are building security into the very fabric of their AI infrastructure.
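If you want to sanity-check the Trend column in the table above, the arithmetic is a plain percent change between the two averages. The helper name below is mine; the inputs are the figures from the table.

```python
def percent_change(old, new):
    """Relative change from old to new, expressed as a percentage."""
    return (new - old) / old * 100


if __name__ == "__main__":
    rows = {
        "Cost of Data Breach ($M)": (4.44, 5.72),
        "Time to Detect (days)": (185, 108),
        "AI-Related CVEs": (1582, 2130),
        "Median Ransom Demand ($)": (800_000, 1_800_000),
    }
    for metric, (old, new) in rows.items():
        print(f"{metric}: {percent_change(old, new):+.1f}%")
```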

The Hidden Risks of AI Infrastructure Debt

The Vercel incident is a classic example of “AI Infrastructure Debt.” This is the accumulation of hidden costs when teams prioritize feature velocity over architectural integrity. When you “layer” AI on top of legacy systems with disconnected processes and messy data, you aren’t just adding intelligence; you’re adding a magnifying glass to your existing vulnerabilities.

Why Technical Debt Kills AI Scalability

AI isn’t a patch for broken systems; it’s an accelerator. If your data pipelines are brittle or your access controls are coarse-grained, an AI agent will simply find and exploit those flaws faster than a human ever could. The hidden debt in the Vercel case was the reliance on a single employee’s OAuth permissions to bridge the gap between a consumer tool and enterprise systems.

I’ve seen large enterprises forced to invest $500 million just to modernize their infrastructure enough to support their AI goals, only to find that their accumulated debt meant they could only finish half the project in three years. To avoid this, you must treat your enterprise architecture as a strategic asset. Choosing a unified platform for agent management over a collection of “one-off” tools might cost more upfront, but it prevents the “sprawl” that leads to breaches like the one we saw in April 2026.

The Lifecycle Costs of “Shadow AI”

Every time an engineer signs up for a new AI coding assistant or data analysis tool without approval, they are adding to your organization’s “Shadow AI” debt. The real cost isn’t just the $20/month subscription; it’s the potential for a $5 million data breach. In a remote-first world, you can’t stop people from using tools, but you can provide them with a “paved path”: a set of pre-vetted, secure AI services that integrate seamlessly with your identity provider. This reduces the friction that drives people toward unsanctioned, high-risk alternatives.

Hardening the Foundation: The NIST AI RMF Playbook

If you want your AI infrastructure to be resilient, you have to stop guessing and start following global standards. The NIST AI Risk Management Framework (AI RMF) is the industry benchmark for a reason. It moves security from a “checklist” to a core business function through four core functions: Govern, Map, Measure, and Manage.

Step-by-Step Implementation of NIST AI RMF for Developers

- Govern: Create an AI Oversight Committee. This isn’t just for the C-suite; it needs to include your lead architects and security engineers. Their job is to define who is accountable when an agent makes a mistake or a tool leaks data.

- Map: Catalog every AI system in use. You can’t protect what you can’t see. Use automated tools to discover “Shadow AI” agents across your cloud and SaaS platforms.

- Measure: Run regular red-teaming exercises. Don’t just scan for CVEs; simulate a “Context.ai” style attack where an attacker has a valid OAuth token. See how far they can get before your monitoring picks them up.

- Manage: Implement “Kill Switches.” If an agent starts making 500 API calls a minute or accessing sensitive customer records, your system should automatically isolate it without waiting for a human to wake up at 3 AM.
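The “Kill Switch” in the Manage step can start as something very small. Below is a minimal sketch of a sliding-window rate check, assuming every agent API call passes through one choke point that calls `record_call`. The class name, the in-memory state, and the choice to isolate once an agent exceeds the limit are assumptions; the 500-calls-per-minute figure is the one used above.

```python
import time
from collections import defaultdict, deque


class KillSwitch:
    """Isolates an agent once it exceeds a call budget within a time window."""

    def __init__(self, max_calls=500, window_seconds=60.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.window = window_seconds
        self._clock = clock                 # injectable so tests can fake time
        self._calls = defaultdict(deque)    # agent_id -> recent call timestamps
        self._isolated = set()

    def is_isolated(self, agent_id) -> bool:
        return agent_id in self._isolated

    def record_call(self, agent_id) -> bool:
        """Record one call. Returns True if allowed, False if the agent is
        (or has just become) isolated."""
        if agent_id in self._isolated:
            return False
        now = self._clock()
        calls = self._calls[agent_id]
        calls.append(now)
        # Drop timestamps that have aged out of the window.
        while calls and now - calls[0] >= self.window:
            calls.popleft()
        if len(calls) > self.max_calls:
            self._isolated.add(agent_id)    # stays isolated until a human reviews
            return False
        return True


if __name__ == "__main__":
    switch = KillSwitch(max_calls=500)
    allowed = sum(switch.record_call("agent-x") for _ in range(600))
    print(f"allowed={allowed}, isolated={switch.is_isolated('agent-x')}")
```

In production the isolation step would revoke credentials or cut network access rather than flip a flag in memory, but the trigger logic is the same.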

The ISO 42001 Advantage

While NIST is a voluntary framework, ISO 42001 is the certifiable gold standard for AI Management Systems (AIMS). Adopting this doesn’t just make you more secure; it gives your enterprise customers the confidence they need to sign that seven-figure contract. It forces you to document your “system cards” explaining exactly what your models do, how they were trained, and what the risks are. In 2026, this level of transparency is becoming a requirement for any company operating in the global market.

Engineering the “Unbreakable” Agent: Sandboxing and MicroVMs

If the Vercel employee had been running their AI tools in a properly sandboxed environment, the stolen OAuth token would have been a dead end for the attacker. The problem with standard Docker containers is that they share the host kernel. If an AI agent generates malicious code, or if a hacker takes over the agent, a single kernel-level flaw can be enough to “escape” the container and gain access to the underlying server.

Firecracker and the Move to MicroVMs

The solution is hardware-level isolation via MicroVMs like Firecracker. This is what the big players use to run untrusted code at scale. Every time an agent performs a task, it should be in its own ephemeral VM that is destroyed the second the task is finished.

| Isolation Level | Mechanism | Security Level | Best For |
| --- | --- | --- | --- |
| Docker Containers | Process-level (Shared Kernel) | Moderate | Trusted internal code. |
| gVisor | User-space kernel (Syscall interception) | High | Defense-in-depth for web apps. |
| Firecracker MicroVMs | Hardware-level (KVM) | Gold Standard | Untrusted AI agents and tool use. |
| Cloud Sandboxes (E2B) | Managed MicroVMs | High | Fast-moving agentic startups. |

Implementing Network Egress Filtering

The real kicker is that most sandboxes allow wide-open internet access by default. An agent writing a Python script has no reason to call an unknown IP in a foreign country. You must implement “Default-Deny” egress filtering. Your sandbox should only be able to talk to the specific APIs it needs to function (e.g., your database or a specific OpenAI endpoint). If an agent tries to exfiltrate data, the network layer blocks it instantly, and your SOC gets an alert.
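The policy behind “Default-Deny” is easy to express, even though real enforcement belongs in the network layer (firewall rules or an egress proxy), not in application code. The sketch below shows only the decision logic. The allowlist entries are examples, and `db.internal.example` is a made-up host.

```python
from urllib.parse import urlsplit

# Exact (host, port) pairs the sandbox may reach. Everything else is denied.
# Both entries are illustrative; db.internal.example is a hypothetical host.
ALLOWED_EGRESS = {
    ("api.openai.com", 443),
    ("db.internal.example", 5432),
}

DEFAULT_PORTS = {"http": 80, "https": 443}


def is_egress_allowed(url, allowlist=ALLOWED_EGRESS) -> bool:
    """Default-deny check: True only for an exact host and port match."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
        port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    except ValueError:
        return False  # malformed URL or port: deny
    if host is None or port is None:
        return False
    return (host.lower(), port) in allowlist


if __name__ == "__main__":
    for target in (
        "https://api.openai.com/v1/chat/completions",
        "https://api.openai.com.evil.example/upload",
        "https://203.0.113.7/exfil",
    ):
        verdict = "ALLOW" if is_egress_allowed(target) else "BLOCK + alert SOC"
        print(f"{verdict}: {target}")
```

Note the exact match on hostname: suffix or substring matching would let a lookalike domain slip through.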

The Identity Pivot: From Passwords to Ephemeral Tokens

The Vercel incident proved that static credentials are a liability. If a secret exists in an environment variable for months, it’s eventually going to leak. The future of AI infrastructure is “Identity-Based and Ephemeral”.

The Strategy: Just-In-Time (JIT) Secrets

Instead of giving an agent a permanent API key for your database, you give it a “Token Exchange” mechanism. When the agent needs to perform a task, it requests a temporary credential that is valid only for that specific session and that specific operation.

What most people miss is that this requires a “Unified Identity Platform.” You have to be able to trace an agent’s actions all the way back to the human who initiated the request. If an agent deletes a production database, you shouldn’t just see “Service_Account_AI” in the logs; you should see “Junior_Dev_A through Agent_X”. This level of accountability is what keeps the $2 million ransoms at bay.
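Here is a rough sketch of what a Just-In-Time credential with a traceable identity chain could look like, using only Python’s standard library. This is not a substitute for a real token service or a standard such as OAuth token exchange: the token format, the function names, and the hard-coded demo key are all assumptions made for illustration.

```python
import base64
import hashlib
import hmac
import json
import time

# Demo-only key (assumption). In practice this would come from a secrets
# manager and never live in source code.
SIGNING_KEY = b"demo-only-signing-key"


def _sign(payload: bytes) -> str:
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(human, agent, operation, ttl_seconds=60, now=None):
    """Mint a short-lived token bound to one operation and one identity chain."""
    now = time.time() if now is None else now
    claims = {
        "sub": f"{human} through {agent}",  # the chain that shows up in logs
        "op": operation,
        "exp": now + ttl_seconds,
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    return f"{payload.decode()}.{_sign(payload)}"


def verify_token(token, operation, now=None):
    """Return the identity chain if the token is valid for this operation,
    otherwise None (bad signature, wrong operation, or expired)."""
    now = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return None
    if not hmac.compare_digest(signature.encode(), _sign(payload.encode()).encode()):
        return None
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if claims["op"] != operation or now >= claims["exp"]:
        return None
    return claims["sub"]


if __name__ == "__main__":
    token = issue_token("Junior_Dev_A", "Agent_X", "db:read", ttl_seconds=60)
    print(verify_token(token, "db:read"))    # identity chain for the audit log
    print(verify_token(token, "db:delete"))  # wrong operation is rejected
```

A stolen token of this kind is worth far less than a static API key: it expires in seconds, works for one operation, and names the human behind it.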

Scaling Globally: ROI and the Business Case for Security

I often hear developers say that “security is a cost center.” That’s old-school thinking. In the AI era, security is a “revenue enabler.” If your platform is perceived as insecure, you can’t sell to banks, healthcare providers, or government agencies. The ROI of proactive security investment is found in the “Performance Gap” between leaders and laggards.

Cost Comparison: Managed vs. Self-Hosted Security

Choosing to self-host your AI infrastructure to “save money” is often a trap. When you account for the hidden labor costs of maintenance, security patching, and CVE response, a managed solution like Azure OpenAI or Vertex AI is almost always cheaper for small and medium teams.

- Managed Hosting ($24-$50/mo): Zero DevOps, automatic patches, predictable costs.

- Self-Hosted VPS ($10-$50/mo + Labor): Requires Docker expertise, 2-4 hours of maintenance per month, manual patching.

- Hidden Labor Cost: If your dev’s hourly rate is $100, and they spend 5 hours a month “babysitting” a self-hosted AI server, your real cost is $500/month, ten times the price of a $50 managed service.

Self-hosting only makes economic sense at massive scale (e.g., >10M tokens per day) or when you have extreme regulatory requirements that forbid any cloud data transfer. For everyone else, “Managed” is the path to both security and scalability.
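The comparison above is simple enough to run with your own numbers. The sketch below uses the figures from this section ($50 managed plan, $10 VPS, $100/hour, 5 hours a month); the function names are mine, and the model deliberately ignores everything except hosting fees and labor.

```python
def monthly_cost(hosting_fee, labor_hours=0.0, hourly_rate=0.0):
    """Total monthly cost: hosting fee plus the labor spent maintaining it."""
    return hosting_fee + labor_hours * hourly_rate


def break_even_hours(managed_fee, vps_fee, hourly_rate):
    """Maintenance hours per month at which self-hosting costs the same
    as the managed plan. Above this, managed is cheaper."""
    return (managed_fee - vps_fee) / hourly_rate


if __name__ == "__main__":
    managed = monthly_cost(50)
    self_hosted = monthly_cost(10, labor_hours=5, hourly_rate=100)
    print(f"managed: ${managed:.0f}/mo, self-hosted: ${self_hosted:.0f}/mo")
    print(f"break-even: {break_even_hours(50, 10, 100):.1f} hours/month")
```

With these inputs, self-hosting only wins if maintenance takes less than about 24 minutes a month, which is well under the 2-4 hours quoted above.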

The Professional Roadmap: Careers in the AI-Security Intersection

If you are looking to build a career in tech right now, “AI Security Specialist” is the most secure path you can take. The market is facing a desperate shortage of people who understand both large language models and network defense.

Essential Skills for the 2026 Job Market

To thrive, you need to move beyond “traditional” cybersecurity. Simply knowing how to run a port scan isn’t enough. You need to understand:

- Adversarial ML: How to prompt-inject a model and, more importantly, how to build a “firewall” against it.

- MLSecOps: How to secure the entire AI pipeline, from data collection to model deployment.

- Agentic Orchestration: Understanding protocols like MCP and how to sandbox autonomous agents in production.

- Governance & Ethics: Helping companies navigate the legal minefield of the EU AI Act and NIST frameworks.

| Role | Median Salary (2025) | Core Certifications | Key Skill |
| --- | --- | --- | --- |
| AI Security Engineer | $185k | CAISP, AWS ML Specialty | Sandboxing & Red-Teaming. |
| MLSecOps Architect | $210k | CISSP + AI Coursework | Pipeline Security & CI/CD. |
| AI Compliance Officer | $160k | ISO 42001 Auditor | Regulatory Mapping & Audits. |
| AI Red-Team Lead | $195k | CEH + LLM Specialization | Prompt Injection & Jailbreaking. |

The “Real Talk” Reality Check: What You Must Do Now

The Vercel incident wasn’t a fluke; it was a preview. Attackers are already using AI to accelerate their own workflows: phishing campaigns have surged 1,265% since the introduction of generative tools. They are moving with a “surprising velocity” and a “detailed understanding of internal systems” that suggests they are using AI to analyze stolen source code in real time.

Immediate Action Plan for Tech Leaders

- Stop the Bleeding: Revoke all broad-scope OAuth tokens immediately. Force a re-authentication of every third-party AI tool and limit permissions to the absolute bare minimum.

- Encrypt the Config: Move your environment variables out of plaintext. Use a dedicated secrets manager that provides encrypted, identity-aware access for both humans and machines.

- Mandate MFA Everywhere: If you don’t have hardware-based MFA (like YubiKeys) for your core dev team, you are essentially inviting a Context.ai-style compromise.

- Audit the Supply Chain: Before you integrate a new AI tool, ask for their ISO 42001 certification or a detailed AI security audit. If they can’t provide one, they are a liability, not an asset.

- Build a Sandbox Culture: Treat every AI-generated script as untrusted code. Run all agent tools in ephemeral, network-isolated MicroVMs. This isn’t “over-engineering”; it’s the only way to scale safely.

The hidden mechanics of the AI era are simple: the speed of your innovation must be matched by the strength of your foundation. Vercel is a sophisticated company with a world-class security team, and they still faced a crisis. Your organization is likely even more vulnerable. The lesson is clear: don’t wait for a $2 million ransom demand to start taking AI infrastructure security seriously. Build for trust, verify every identity, and sandbox every action. That is how you survive the AI revolution.